I’ve been doubling down on my Vim usage lately, and getting to know some of the dot motions really feels like a superpower. I hate to say I’ve drunk the Kool-Aid, but I think I’m getting there.

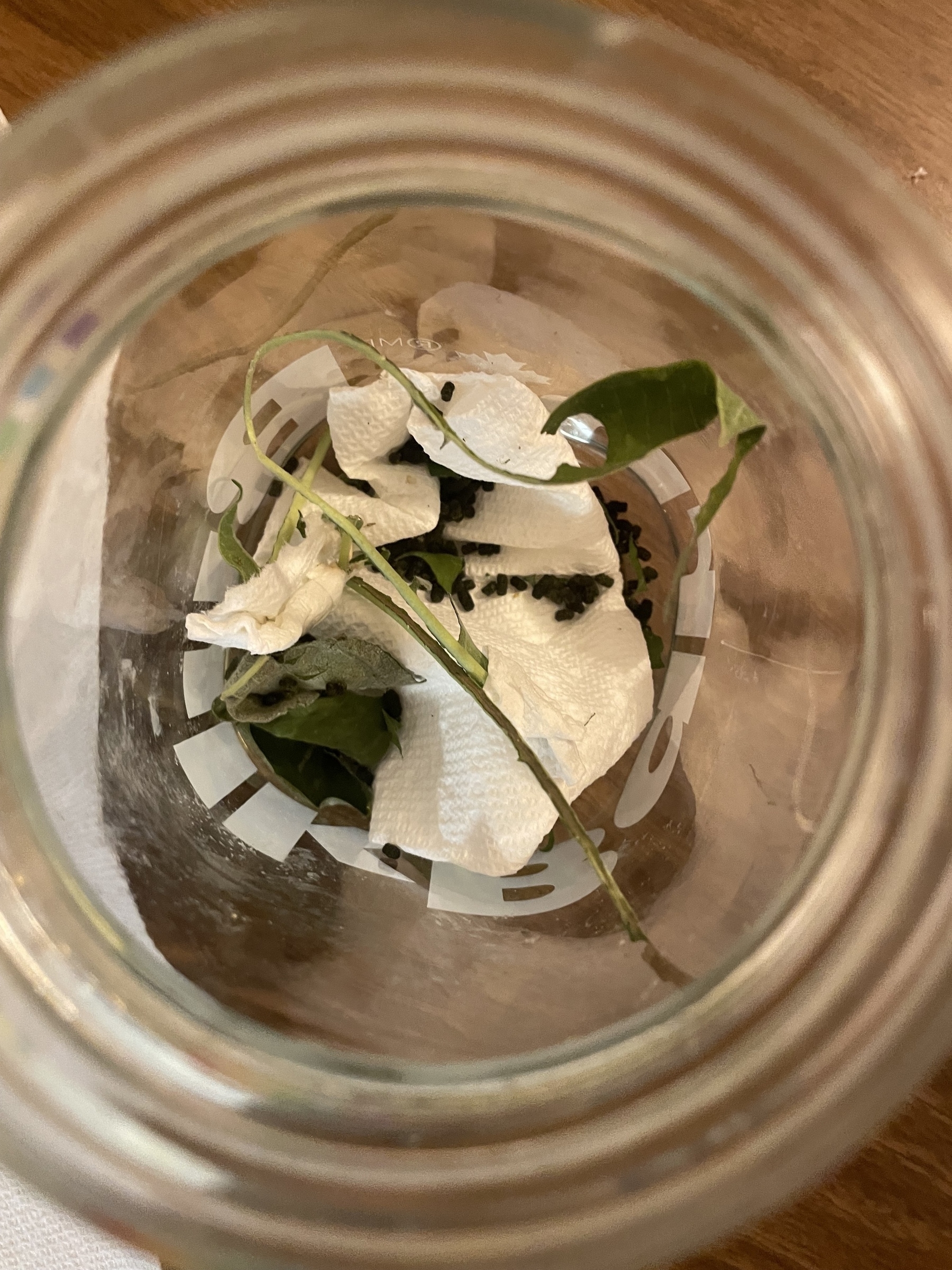

My daughter found two monarch caterpillars on our milkweed plants, which has kicked off an amazing journey.

It’s wild how much they eat and also how fast they grow.

Finished reading: A Different Kind of Power by Jacinda Ardern 📚

I liked this book a lot, and I like Ardern a lot. I know she’s not overly popular in New Zealand, but all the negative reviews I’ve read center on mask mandates and required vaccinations, which I have no issues with. I’d be curious to hear from some Kiwis about this.

Simplicity in Editors

Two things I’ve read/seen recently which have served as a huge inspiration to me:

Mitchell Hashimoto talking about his austere editor setup. And yobibyte talking about his plugin-free Neovim setup.

These talks inspired me to make the full jump over to Neovim. Admittedly, I’m using LSPs, Treesitter, and a few other plugins, but it’s a big step back from the full-featured setup of Zed or Sublime that I was running before. I’m still in the “this is hard” phase, but I think that moving a bit slower and focusing on each line, as opposed to all the jumping around I did before, will ultimately give me a deeper understanding of the codebase and the discipline to keep bigger mental models in my head.

Apparently Netanyahu is telling residents of Gaza to “leave now”. Sorry, but where the hell are they supposed to go? They’ve been blocked from leaving for 20 years.

I work from home, in the basement; my 1-year-old cries whenever I go downstairs. My wife has to stand with her at the top of the stairs and they wave me down as I go. It feels like leaving for the office, but like 10 times a day.

Is learning Vim actually faster if you then spend multiple hours a week tweaking your Neovim config?

From the morning walk.

Finally took the plunge and decided to start paying for Micro.one… Very good chance I will upgrade to the full service soon.

Before sending that email to hundreds of thousands of customers… ask yourself, is announcing “we now have dark mode” broadcast-worthy?